US Library of Congress

Machine learning model for automated cataloguing (concept prototype)

Using Large Language Models (LLMs) and out-of-copyright books as training data to enhance cataloging at scale.

Summary

6,400

Titles in the training dataset

92%

Maximum accuracy obtained (model with a specific-theme dataset)

362

Titles selected at random to evaluate each method

My role

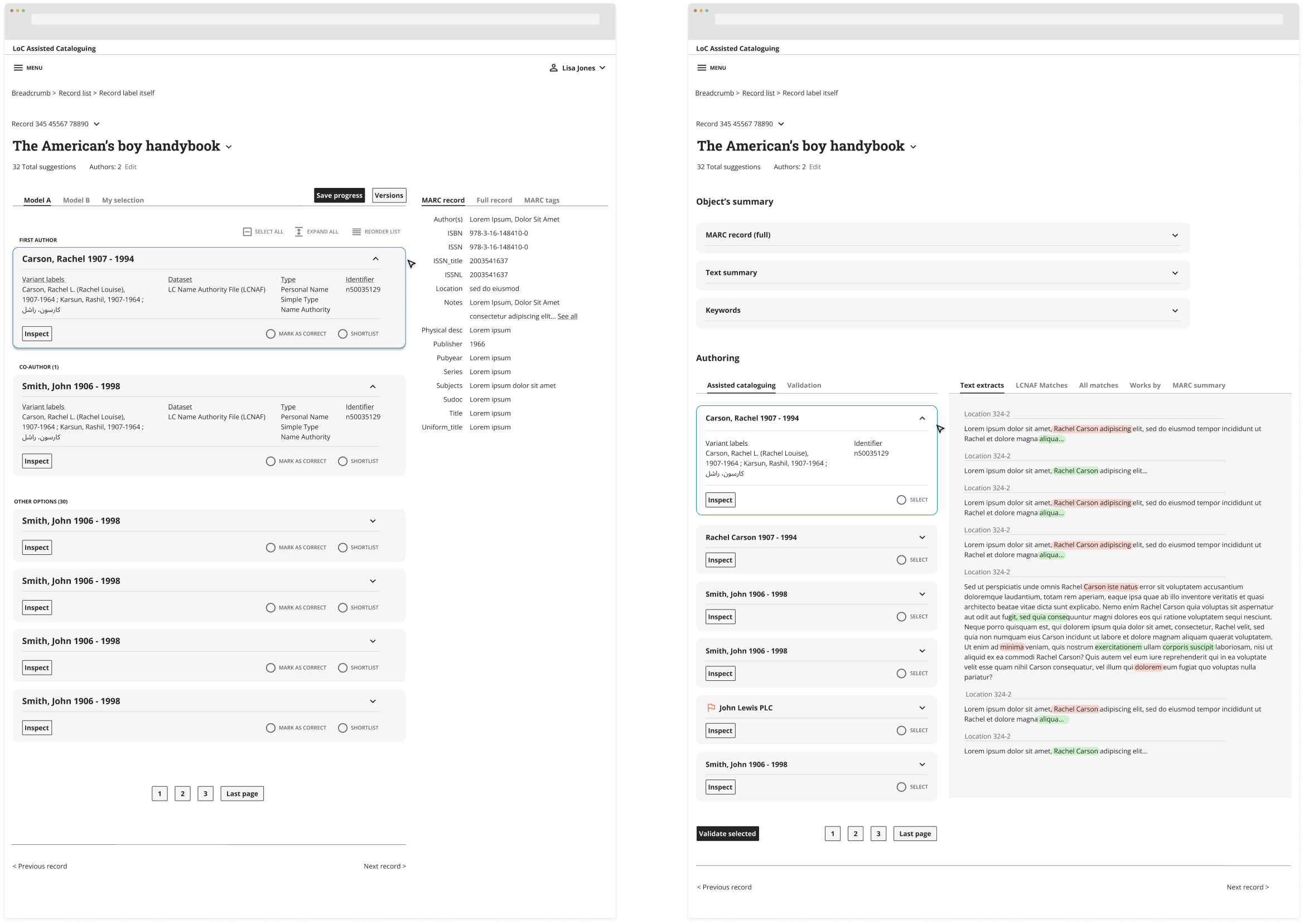

- Prototyping of the interface.

- Interaction patterns.

- Information architecture.

Thanks to

Finlay McCourt (Machine Learning Engineer).


The US Library of Congress routinely runs experiments to develop future service and system improvements.

This series of experiments, run by the cataloguing office, was about exploring automated cataloguing of new e-books entering the LOC system.

- The goal was to validate whether older (out-of-copyright) books could serve as suitable training and test material for extracting metadata from modern open-access e-books.

By training models on a sample of these titles, we found an effective way to automate the main aspects of cataloging by title and by author.

We worked to:

- Optimise the main combinations of algorithm inputs needed to reach a level of accuracy that would actually help the manual work of human cataloguers at the LOC.

- Develop an interface skeleton whose hierarchy and layout would support extracting this information as efficiently as possible.

One of the main challenges was that cataloging rules are hard to standardise and apply in a cookie-cutter way.

Traditional sorting methods may work well for some fields such as ISBNs, but aren't able to cope well with irregularities or inconsistencies in bibliographic information.

We wanted to test how well metadata extraction performs on text generated by OCR (optical character recognition), and to check whether historic data and its metadata could work for modern texts.

- For one dataset, image data for the first four to seven pages of each title was used as input for the OCR process.

- For the other, 50 records were randomly selected, and the first 3,000 words of plain text from each were used, extracted with AI (Document Text Detection in Google Vision).
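The second input preparation step can be illustrated with a minimal sketch. It assumes the OCR text is already available as a string per record; the record layout, the function names `first_words` and `sample_records`, and the fixed seed are hypothetical and not taken from the project.

```python
import random


def first_words(text: str, limit: int = 3000) -> str:
    """Return the first `limit` whitespace-separated words of `text`."""
    return " ".join(text.split()[:limit])


def sample_records(records: list[dict], k: int = 50, seed: int = 0) -> list[dict]:
    """Pick `k` records at random without replacement (seeded so the draw is repeatable)."""
    rng = random.Random(seed)
    return rng.sample(records, k)


# Hypothetical records: an identifier plus placeholder OCR plain text.
records = [{"id": i, "ocr_text": "word " * 5000} for i in range(200)]

# Model inputs: 50 randomly chosen records, each cut to its first 3,000 words.
inputs = [
    {"id": r["id"], "text": first_words(r["ocr_text"])}
    for r in sample_records(records)
]
```

Counting words by splitting on whitespace is a simplification; a real pipeline might count tokens instead, depending on the model's input limits.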

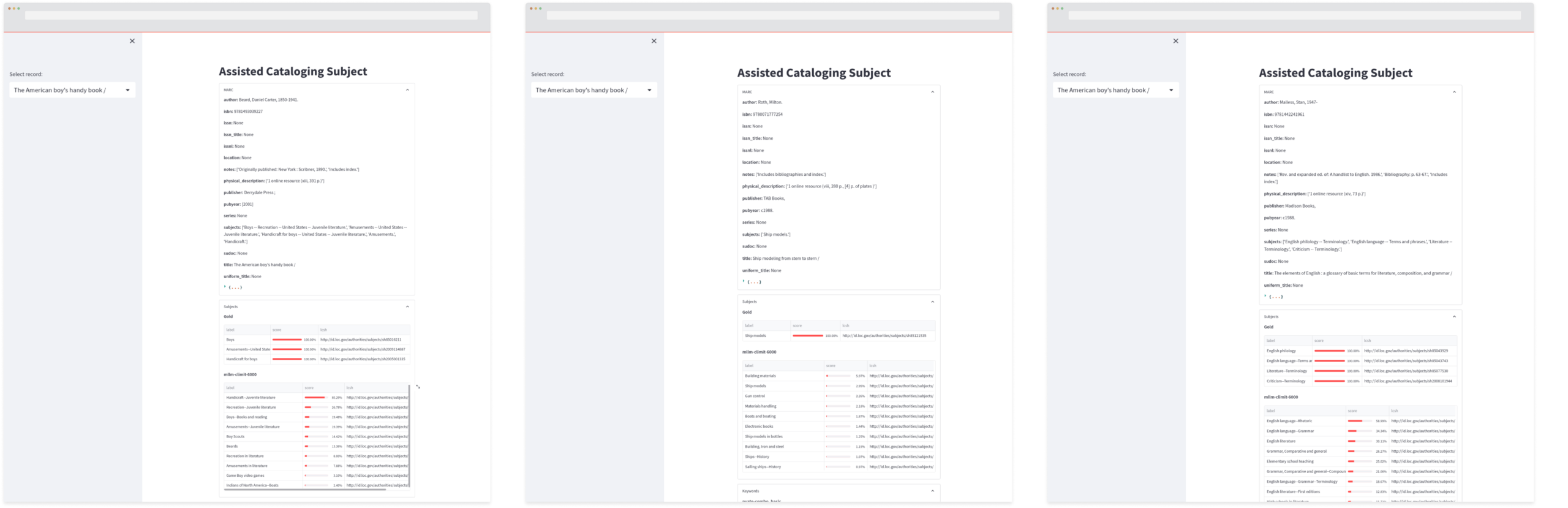

Targeting mainly Title, Subtitle, Publisher and Publication year as data points, the development team evaluated multiple AI methods (Large Language Model-based approaches) to extract bibliographic metadata from the Directory of Open Access Books (DOAB).

Conclusions:

- Fine-tuning the source material (random sample vs. specifically themed titles) made a slight difference in accuracy, which ranged from 86% to 92% in the best case.

- The more modern the dataset material, the better it is for data extraction (better, more streamlined and standardised information, along with fewer errors).
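How accuracy was scored is not detailed here. As a rough sketch, per-field accuracy could be computed by comparing extracted values with reference catalogue records after light normalisation. The exact-match rule, the field keys, the `normalise` and `field_accuracy` helpers and the sample records below are all assumptions made for illustration, not the team's actual method or data.

```python
import unicodedata

# Assumed keys for the four targeted data points.
FIELDS = ("title", "subtitle", "publisher", "publication_year")


def normalise(value) -> str:
    """Lower-case, Unicode-normalise and collapse whitespace; missing values become ''."""
    text = unicodedata.normalize("NFKC", str(value or ""))
    return " ".join(text.casefold().split())


def field_accuracy(predicted: list[dict], reference: list[dict]) -> dict[str, float]:
    """Share of records whose normalised predicted value equals the reference, per field."""
    scores = {}
    for field in FIELDS:
        matches = sum(
            normalise(p.get(field)) == normalise(r.get(field))
            for p, r in zip(predicted, reference)
        )
        scores[field] = matches / len(reference)
    return scores


# Made-up records, for illustration only.
reference = [
    {"title": "A History of Maps", "subtitle": "From Clay to Cloud",
     "publisher": "Example Press", "publication_year": 2019},
    {"title": "Reading Rivers", "subtitle": "",
     "publisher": "Sample University Press", "publication_year": 2021},
]
predicted = [
    {"title": "a history of  maps", "subtitle": "From Clay to Cloud",
     "publisher": "Example Press", "publication_year": "2019"},
    {"title": "Reading Rivers", "subtitle": None,
     "publisher": "Sample Univ. Press", "publication_year": 2020},
]

scores = field_accuracy(predicted, reference)
```

Exact matching is strict: an abbreviated publisher name counts as a miss, so a fuzzier comparison may be more appropriate for fields with inconsistent spellings.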

alexnogues.com 2025-26